Building AI systems in healthcare isn’t just a technical challenge. It’s a regulatory one.

In most industries, data pipelines focus on:

- Scalability

- Performance

- Cost

In US healthcare, everything revolves around:

- Compliance

- Privacy

- Traceability

If your AI pipeline mishandles patient data, it’s not just a bug; it’s a legal risk.

This is where ADLC (AI-driven software development lifecycle) becomes critical. It ensures that compliance, security, and auditability are built into the system, not added later.

Understanding the Basics: HIPAA and PHI

What is HIPAA?

The Health Insurance Portability and Accountability Act (HIPAA), enacted in 1996, is the primary US federal law governing the privacy and security of patient health data.

It defines how healthcare data should be:

- Stored

- Processed

- Shared

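HIPAA applies to covered entities and their business associates:

- Healthcare providers that transmit health information electronically

- Health plans, including insurance companies

- Healthcare clearinghouses

- Business associates, such as health tech platforms that handle PHI on behalf of a covered entity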

What is Protected Health Information (PHI)?

PHI is health information that can be linked to a specific patient and is created, held, or transmitted by a HIPAA-covered organization. Examples include:

- Names

- Addresses

- Medical records

- Lab results

- Device identifiers

Even partial data can qualify as PHI if it can be linked back to an individual.

Why AI Data Pipelines Are High Risk in Healthcare

AI pipelines typically:

- Ingest large datasets

- Transform and enrich data

- Feed models for predictions

In healthcare, this creates risks like:

- Unauthorized access

- Data leakage

- Lack of traceability

Without proper design, AI systems can easily violate HIPAA.

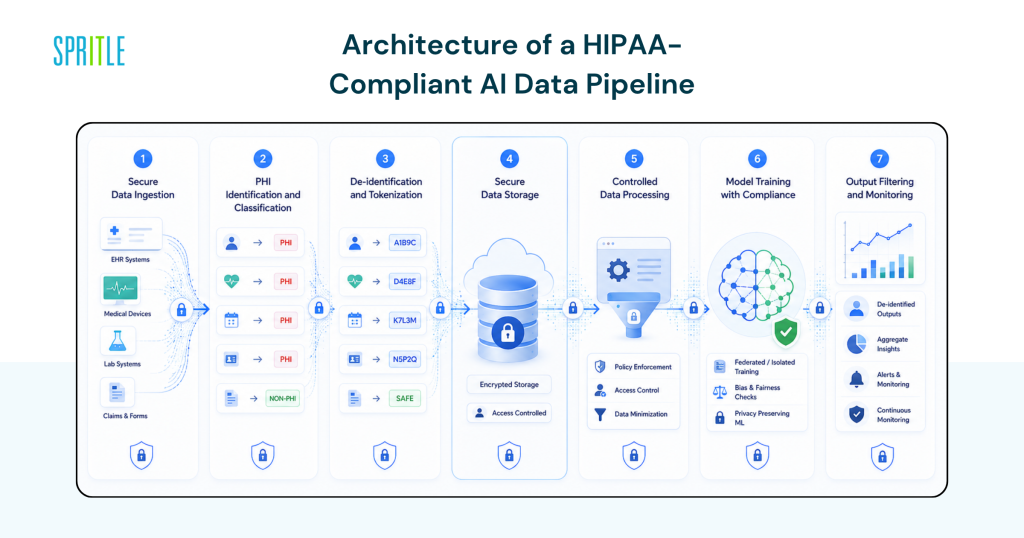

Architecture of a HIPAA-Compliant AI Data Pipeline

A compliant pipeline isn’t just about encryption. It’s about end-to-end control over how PHI moves through every stage.

1. Secure Data Ingestion

Data enters the system from:

- EHR systems

- APIs

- Medical devices

Best practices:

- Use encrypted channels (TLS)

- Validate data sources

- Apply strict authentication
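As an illustration, here is a minimal sketch of these practices using only Python’s standard library. It assumes each trusted source shares a secret key with the pipeline and signs its payloads with HMAC-SHA256; the `SOURCE_KEYS` registry and the `sign` and `verify_source` helpers are hypothetical, not part of any EHR or device API.

```python
import hashlib
import hmac
import ssl

# Hypothetical registry of trusted sources and their shared secrets.
# In production these would come from a secrets manager, never source code.
SOURCE_KEYS = {
    "ehr-main": b"example-secret-ehr",
    "device-gateway": b"example-secret-devices",
}


def make_tls_context() -> ssl.SSLContext:
    """Client TLS context: verifies certificates and hostnames, refuses TLS < 1.2."""
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


def sign(source_id: str, payload: bytes) -> str:
    """What a trusted source computes before sending a payload."""
    return hmac.new(SOURCE_KEYS[source_id], payload, hashlib.sha256).hexdigest()


def verify_source(source_id: str, payload: bytes, signature: str) -> bool:
    """Accept a payload only from a known source with a valid signature."""
    key = SOURCE_KEYS.get(source_id)
    if key is None:
        return False  # unknown source: reject by default
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences
    return hmac.compare_digest(expected, signature)
```

Rejecting unknown sources by default, rather than maintaining a blocklist, keeps the ingestion edge closed until a source has been explicitly onboarded.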

2. PHI Identification and Classification

Before processing:

- Detect PHI fields automatically

- Tag sensitive data

AI pipelines should include:

- Data classification layers

- Schema validation
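A rule-based classification layer might look like the sketch below. The field names, regex patterns, and `EXPECTED_SCHEMA` are illustrative assumptions; production systems usually combine rules like these with NLP-based entity recognition, since regexes alone miss names and other free-text identifiers.

```python
import re
from dataclasses import dataclass, field

# Illustrative field names always treated as direct identifiers.
PHI_FIELD_NAMES = {"name", "address", "ssn", "mrn", "phone", "email", "dob", "device_id"}

# Illustrative value patterns for identifiers hiding in other fields.
PHI_VALUE_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "phone": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.]+\b"),
    "date": re.compile(r"\b\d{4}-\d{2}-\d{2}\b"),
}

# Hypothetical expected schema for an incoming lab-result record.
EXPECTED_SCHEMA = {"mrn": str, "name": str, "test_code": str, "value": float, "notes": str}


@dataclass
class ClassifiedRecord:
    data: dict
    phi_fields: dict = field(default_factory=dict)  # field -> reason it was tagged


def validate_schema(record: dict) -> list:
    """Return schema violations: missing, unexpected, or wrongly typed fields."""
    errors = [f"missing field: {k}" for k in EXPECTED_SCHEMA if k not in record]
    errors += [f"unexpected field: {k}" for k in record if k not in EXPECTED_SCHEMA]
    errors += [
        f"bad type for {k}" for k, t in EXPECTED_SCHEMA.items()
        if k in record and not isinstance(record[k], t)
    ]
    return errors


def classify(record: dict) -> ClassifiedRecord:
    """Tag each field that looks like PHI, by field name or by value pattern."""
    result = ClassifiedRecord(data=record)
    for key, value in record.items():
        if key.lower() in PHI_FIELD_NAMES:
            result.phi_fields[key] = "identifier field"
            continue
        if isinstance(value, str):
            for label, pattern in PHI_VALUE_PATTERNS.items():
                if pattern.search(value):
                    result.phi_fields[key] = f"contains {label}"
                    break
    return result
```

Tagging happens before any transformation, so downstream steps can decide per field whether to drop, tokenize, or pass it through.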

3. De-identification and Tokenization

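To use data safely for AI:

- Remove identifiers (de-identification, via HIPAA’s Safe Harbor or Expert Determination method)

- Replace identifiers that must stay linkable with tokens (tokenization), keeping the token mapping in a separate, access-controlled store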

This ensures:

- Models don’t directly access PHI

- Data remains usable for training
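The sketch below drops a few direct identifiers and replaces the medical record number with a random token. It is an illustration under stated assumptions: `DROP_FIELDS` covers only part of HIPAA’s 18 Safe Harbor identifiers, and the in-memory `TokenVault` class is a hypothetical stand-in for a separately secured token service.

```python
import secrets

# Removed outright: a small illustrative subset of the Safe Harbor identifiers.
DROP_FIELDS = {"name", "address", "phone", "email", "ssn"}
# Replaced by a token so records can still be linked across datasets.
TOKENIZE_FIELDS = {"mrn"}


class TokenVault:
    """Hypothetical token store. Tokens are random, not derived from the
    identifier; the mapping would live in a separate, access-controlled service."""

    def __init__(self):
        self._forward = {}  # identifier -> token
        self._reverse = {}  # token -> identifier

    def tokenize(self, value: str) -> str:
        if value not in self._forward:
            token = "tok_" + secrets.token_hex(8)
            self._forward[value] = token
            self._reverse[token] = value
        return self._forward[value]

    def detokenize(self, token: str) -> str:
        # Re-identification path: should be tightly restricted and audited.
        return self._reverse[token]


def deidentify(record: dict, vault: TokenVault) -> dict:
    out = {}
    for key, value in record.items():
        if key in DROP_FIELDS:
            continue  # drop direct identifiers
        if key in TOKENIZE_FIELDS:
            out[key] = vault.tokenize(str(value))
        else:
            out[key] = value
    return out
```

Because the same MRN always maps to the same token, records can still be joined for training without the model ever seeing the MRN. Random tokens cannot be reversed without access to the vault.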

4. Secure Data Storage

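The HIPAA Security Rule calls for:

- Encryption at rest (technically an "addressable" safeguard: you must implement it, or document why it is not reasonable and appropriate and adopt an equivalent alternative)

- Access control mechanisms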

Use:

- Role-Based Access Control (RBAC)

- Attribute-Based Access Control (ABAC)
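Encryption at rest is usually delegated to the storage layer and a key management service, so this sketch focuses on access control: a role-based check (does this role allow the action?) followed by an attribute-based check (is this clinician on the patient’s care team?). The roles, actions, and attributes are hypothetical.

```python
from dataclasses import dataclass

# RBAC: actions each role may perform (hypothetical roles and actions).
ROLE_PERMISSIONS = {
    "clinician": {"read_record", "write_note"},
    "data_scientist": {"read_deidentified"},
    "auditor": {"read_audit_log"},
}


@dataclass(frozen=True)
class User:
    user_id: str
    role: str
    care_team_patients: frozenset = frozenset()  # ABAC attribute


@dataclass(frozen=True)
class Resource:
    patient_id: str
    contains_phi: bool  # ABAC attribute, e.g. set by a classification layer


def is_allowed(user: User, action: str, resource: Resource) -> bool:
    # RBAC: the role must grant the action; unknown roles get no permissions.
    if action not in ROLE_PERMISSIONS.get(user.role, set()):
        return False
    # ABAC: PHI-bearing resources also require a care relationship.
    if resource.contains_phi:
        return resource.patient_id in user.care_team_patients
    return True
```

Unknown roles fall through to an empty permission set, so the function denies by default.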

5. Controlled Data Processing

During transformations:

- Limit PHI exposure

- Use secure compute environments

Examples:

- Isolated processing containers

- Encrypted memory handling

6. Model Training with Compliance

AI models should:

- Avoid memorizing PHI

- Use anonymized datasets

Techniques:

- Differential privacy

- Federated learning
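In model training, differential privacy is usually applied through DP-SGD, for example via libraries such as Opacus for PyTorch. The simpler sketch below shows the core idea on an aggregate query instead: the Laplace mechanism adds noise scaled to sensitivity / epsilon, so no single patient’s presence noticeably changes the result. The function names and epsilon values are illustrative; federated learning is not shown.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two i.i.d. exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def dp_count(values, predicate, epsilon: float, rng: random.Random) -> float:
    """Epsilon-differentially private count of values matching predicate.

    Adding or removing one patient changes a count by at most 1
    (sensitivity 1), so Laplace noise with scale 1 / epsilon is sufficient.
    random.Random is fine for illustration; production DP should use a
    secure source such as random.SystemRandom and floating-point-safe sampling.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)
```

For example, `dp_count(ages, lambda a: a >= 65, epsilon=1.0, rng=random.SystemRandom())` returns the true number of patients aged 65 or older plus random noise. Smaller epsilon means more noise and a stronger privacy guarantee, and each additional query spends more of the overall privacy budget.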

7. Output Filtering and Monitoring

Before exposing results:

- Ensure no PHI leaks in outputs

- Validate responses

This is especially critical for:

- AI assistants

- Clinical decision tools
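A last-line output filter could be sketched as follows. It redacts identifier-like spans from generated text and reports which patterns fired, so the event can be logged. The patterns are illustrative assumptions; names and addresses would need NER-based detection.

```python
import re

# Illustrative patterns only; names and addresses need NER-based detection.
REDACTION_PATTERNS = [
    ("SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
    ("PHONE", re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")),
    ("EMAIL", re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.]+\b")),
    ("MRN", re.compile(r"\bMRN[:#\s]*\w+\b", re.IGNORECASE)),
]


def filter_output(text: str):
    """Redact PHI-like spans; return the safe text and the labels that fired."""
    hits = []
    for label, pattern in REDACTION_PATTERNS:
        text, count = pattern.subn(f"[REDACTED {label}]", text)
        if count:
            hits.append(label)
    return text, hits
```

For instance, `filter_output("Patient MRN: A1234 can be reached at 555-123-4567.")` returns `("Patient [REDACTED MRN] can be reached at [REDACTED PHONE].", ["PHONE", "MRN"])`. A non-empty `hits` list is a signal worth logging and reviewing, not just silently redacting.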

Audit Logs: The Backbone of Compliance

What Are Audit Logs?

Audit logs track:

- Who accessed data

- When it was accessed

- What actions were performed

The HIPAA Security Rule requires audit controls that record and examine activity in systems containing electronic PHI.

What Should Be Logged?

Every pipeline must record:

- Data access events

- Data modifications

- Authentication attempts

- System errors
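One way to capture these events consistently is a structured record written as one JSON line per event. The schema below is an assumption, since HIPAA does not prescribe a log format. Note that it references records by ID or token rather than copying PHI into the log.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from enum import Enum


class EventType(str, Enum):
    DATA_ACCESS = "data_access"
    DATA_MODIFICATION = "data_modification"
    AUTH_ATTEMPT = "auth_attempt"
    SYSTEM_ERROR = "system_error"


def utc_now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass
class AuditEvent:
    event_type: EventType
    actor_id: str     # who: user or service account
    resource_id: str  # what: record ID or token, never the PHI itself
    action: str       # e.g. "read", "update", "login"
    outcome: str      # "success" or "failure"
    timestamp: str = field(default_factory=utc_now)  # when, in UTC

    def to_json(self) -> str:
        record = asdict(self)
        record["event_type"] = self.event_type.value
        return json.dumps(record, sort_keys=True)
```

A call such as `AuditEvent(EventType.DATA_ACCESS, "dr_smith", "tok_ab12", "read", "success").to_json()` produces one line ready to be shipped to an append-only log store.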

Key Features of Healthcare Audit Logs

1. Immutability

Logs must be:

- Tamper-evident, so any alteration is detectable

- Write-once (append-only)

2. Granularity

Capture:

- User-level actions

- Field-level changes

3. Real-Time Monitoring

Detect:

- Suspicious activity

- Unauthorized access
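Write-once storage is what typically enforces immutability in practice. The sketch below shows a complementary technique, hash chaining, in which each entry commits to the hash of the previous one so that later edits become detectable. It also includes a simple real-time rule that flags repeated failed logins. The class and function names, event keys, and threshold are hypothetical.

```python
import hashlib
import json
from collections import Counter

GENESIS = "0" * 64


class HashChainedLog:
    """Append-only log where each entry commits to the previous entry's hash."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(prev_hash: str, event: dict) -> str:
        body = json.dumps(event, sort_keys=True)
        return hashlib.sha256((prev_hash + body).encode()).hexdigest()

    def append(self, event: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry_hash = self._digest(prev_hash, event)
        self.entries.append({"event": event, "prev_hash": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; an edited, reordered, or removed entry breaks it
        (except truncation of the tail, which needs the latest hash kept elsewhere)."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True


def flag_failed_logins(events, threshold: int = 3):
    """Monitoring rule: actors with at least `threshold` failed auth attempts."""
    failures = Counter(
        e["actor_id"] for e in events
        if e.get("event_type") == "auth_attempt" and e.get("outcome") == "failure"
    )
    return sorted(actor for actor, n in failures.items() if n >= threshold)
```

Hash chaining makes tampering evident rather than impossible. Because truncating the newest entries still yields a valid chain, the latest hash should also be stored somewhere the log writer cannot modify.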

Example Audit Flow

- Doctor accesses patient record

- System logs:

  - User ID

  - Timestamp

  - Data accessed

- AI model processes anonymized data

- Output is logged and validated

This ensures full traceability.

How ADLC Ensures Compliance by Design

Traditional pipelines:

- Add compliance later

ADLC pipelines:

- Build compliance into every stage

Continuous Compliance Checks

- Automated policy validation

- Real-time alerts
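Automated policy validation is often implemented as policy as code. The sketch below checks a pipeline configuration against a few rules and returns every violation. The configuration keys and rule IDs are hypothetical; dedicated tools such as Open Policy Agent serve the same purpose at scale.

```python
# Hypothetical policy rules: (rule id, description, check on the pipeline config).
POLICIES = [
    ("ENC-AT-REST", "storage encryption must be enabled",
     lambda c: c.get("storage", {}).get("encrypted") is True),
    ("TLS-MIN", "ingestion must require TLS 1.2 or newer",
     lambda c: c.get("ingestion", {}).get("min_tls") in {"1.2", "1.3"}),
    ("AUDIT-ON", "audit logging must be enabled",
     lambda c: c.get("audit_logging") is True),
    ("NO-RAW-PHI-TRAINING", "training must read de-identified data only",
     lambda c: c.get("training", {}).get("dataset_tier") == "deidentified"),
]


def check_compliance(config: dict) -> list:
    """Return 'ID: description' for every policy the config violates."""
    return [f"{rule_id}: {desc}" for rule_id, desc, check in POLICIES if not check(config)]
```

Run in CI, a non-empty result would fail the build, so a change that disables encryption or audit logging never reaches production. Missing keys count as violations, which keeps the checks fail-closed.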

AI Lifecycle Governance

- Track data lineage

- Monitor model behavior
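Data lineage can be tracked by recording, for each pipeline step, content hashes of its inputs and output along with the code version. The minimal tracker below is a hypothetical sketch; standards such as OpenLineage define richer versions of the same idea.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


def fingerprint(data) -> str:
    """Short content hash of a JSON-serializable dataset snapshot."""
    return hashlib.sha256(json.dumps(data, sort_keys=True).encode()).hexdigest()[:16]


@dataclass
class LineageStep:
    step: str
    code_version: str
    inputs: list   # fingerprints of input datasets
    output: str    # fingerprint of the output dataset
    at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


class LineageTracker:
    def __init__(self):
        self.steps = []

    def record(self, step: str, code_version: str, inputs: list, output) -> str:
        out_fp = fingerprint(output)
        self.steps.append(LineageStep(step, code_version, [fingerprint(i) for i in inputs], out_fp))
        return out_fp

    def trace(self, fp: str) -> list:
        """Walk backwards from an output fingerprint to every upstream step."""
        chain, frontier, seen = [], [fp], set()
        while frontier:
            current = frontier.pop()
            if current in seen:
                continue  # guards against steps whose output equals their input
            seen.add(current)
            for s in self.steps:
                if s.output == current:
                    chain.append(s.step)
                    frontier.extend(s.inputs)
        return chain
```

Tracing a model’s feature set back this way shows which data snapshots and code versions produced it, which is exactly what an auditor asks for.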

Automated Documentation

- Generate compliance reports

- Simplify audits
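Compliance reports can be generated directly from audit events. This sketch assumes each event is a dict with `event_type`, `actor_id`, `action`, `resource_id`, and `outcome` keys; the report layout is an illustrative choice, not a regulatory format.

```python
from collections import Counter


def compliance_report(events: list, period: str) -> str:
    """Summarize audit events for a reporting period as plain text."""
    by_type = Counter(e["event_type"] for e in events)
    failures = [e for e in events if e.get("outcome") == "failure"]
    actors = {e["actor_id"] for e in events}
    lines = [
        f"Compliance summary for {period}",
        f"Total audit events: {len(events)}",
        f"Distinct actors: {len(actors)}",
    ]
    for event_type, count in sorted(by_type.items()):
        lines.append(f"  {event_type}: {count}")
    lines.append(f"Failed operations: {len(failures)}")
    for e in failures:
        lines.append(f"  - {e['actor_id']} {e['action']} on {e['resource_id']}")
    return "\n".join(lines)
```

Generating the report from the same events that feed monitoring means the documentation cannot drift from what the system actually recorded.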

Common Mistakes in Healthcare AI Pipelines

Storing Raw PHI in Training Data

Risk:

- Data leaks

- Legal violations

Weak Access Controls

Risk:

- Unauthorized access

Missing Audit Trails

Risk:

- Failed compliance audits

Overlooking Output Leakage

AI responses may:

- Accidentally expose PHI

Best Practices for Building Secure Pipelines

Minimize PHI Usage

Follow HIPAA’s minimum necessary standard. Only collect and use:

- The PHI strictly required for the task at hand
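One way to enforce this is a per-purpose field allowlist applied before data leaves storage. The purposes and field names below are hypothetical.

```python
# Hypothetical per-purpose allowlists ("minimum necessary" for each use case).
ALLOWED_FIELDS = {
    "readmission_model": {"patient_token", "age_band", "diagnosis_codes", "length_of_stay"},
    "billing_reconciliation": {"patient_token", "claim_id", "amount"},
}


def minimum_necessary(record: dict, purpose: str) -> dict:
    """Keep only the fields approved for this purpose; reject unknown purposes."""
    allowed = ALLOWED_FIELDS.get(purpose)
    if allowed is None:
        raise PermissionError(f"no approved field list for purpose {purpose!r}")
    return {k: v for k, v in record.items() if k in allowed}
```

Unknown purposes raise an error instead of returning an empty record, so a missing approval surfaces immediately rather than silently.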

Encrypt Everything

- Data in transit

- Data at rest

Implement Zero Trust Architecture

- Verify every access request

- No implicit trust
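Per-request verification might be sketched as follows: every service call carries a short-lived signed token, and the receiving service checks signature, expiry, and scope each time instead of trusting the caller’s network location. The token format, `SIGNING_KEY`, and scope names are a simplified, hypothetical stand-in for standards such as JWT, which are typically used alongside mutual TLS.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

SIGNING_KEY = b"example-key-from-secrets-manager"  # hypothetical; never hard-code


def issue_token(subject: str, scope: str, ttl_seconds: int = 300,
                now: Optional[float] = None) -> str:
    now = time.time() if now is None else now
    claims = {"sub": subject, "scope": scope, "exp": now + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode()).decode()
    sig = hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}.{sig}"


def verify_request(token: str, required_scope: str, now: Optional[float] = None) -> bool:
    """Check signature, expiry, and scope on every call: no implicit trust."""
    now = time.time() if now is None else now
    try:
        payload, sig = token.rsplit(".", 1)
    except ValueError:
        return False  # malformed token
    expected = hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, sig):
        return False
    claims = json.loads(base64.urlsafe_b64decode(payload.encode()))
    return claims["exp"] > now and claims["scope"] == required_scope
```

Short expiry times limit the damage from a leaked token, and scoping each token to one action keeps a compromised service from reaching data it was never meant to see.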

Regular Audits and Testing

- Conduct compliance checks

- Simulate attack scenarios

Real-World Applications

Clinical Decision Support Systems

AI analyzes:

- Patient history

- Lab results

While ensuring:

- PHI protection

Remote Patient Monitoring

Devices send:

- Real-time health data

Pipeline ensures:

- Secure ingestion

- Continuous monitoring

Healthcare Chatbots

AI interacts with patients:

- Answers queries

- Provides guidance

Must ensure:

- No PHI leakage in responses

FAQ

Q: What is PHI in AI pipelines?

A: PHI is any patient-identifiable data that must be protected under HIPAA during collection, processing, and storage.

Q: How do audit logs help in compliance?

A: They provide traceability of all data access and actions, which is required for HIPAA audits and security monitoring.

Q: Can AI models be trained on PHI?

A: Yes, but only for purposes HIPAA permits or with patient authorization, and with strict safeguards such as de-identification where possible, access controls, and secure environments.

Q: What is the role of ADLC in healthcare AI?

A: ADLC ensures compliance, security, and governance are integrated into every stage of the AI pipeline.

Conclusion

AI in healthcare is powerful, but also heavily regulated.

To build reliable systems, teams must go beyond performance and focus on:

- Compliance

- Data protection

- Auditability

By integrating these into the AI-driven software development lifecycle, organizations can create AI pipelines that are not only intelligent but also secure, compliant, and trustworthy.

In healthcare, that’s not optional. It’s essential.