Artificial Intelligence is no longer a futuristic concept or an experimental capability. In 2026, AI has firmly embedded itself into core business operations—powering decisions in hiring, finance, healthcare, customer experience, and beyond.

This shift brings a fundamental change: AI risk is now business risk.

For Quality Engineering teams, especially QA leaders, this marks a turning point. The role is evolving from validating functionality to ensuring that intelligent systems are trustworthy, safe, and compliant.

From Experimentation to Enforcement

For years, organizations approached AI with curiosity—running pilots, proofs of concept, and isolated use cases. That phase is over.

In 2026, AI regulation is moving from policy to active enforcement in several regions. Frameworks like the EU AI Act and evolving global compliance standards now require organizations to demonstrate:

- Clear governance structures

- Risk assessment mechanisms

- Auditability of AI decisions

- Continuous monitoring systems

It’s no longer enough to say “we use AI responsibly.”

Organizations must now prove it—with evidence.

AI Governance Is Now Enterprise Governance

One of the biggest mindset shifts is that AI governance cannot exist in isolation.

It must integrate deeply with:

- Enterprise Risk Management

- Cybersecurity frameworks

- Data governance policies

- Vendor and third-party risk systems

AI systems interact with multiple layers of enterprise architecture, making governance a cross-functional responsibility.

For QA teams, this means working closely with:

- Security teams

- Data engineering teams

- Compliance and legal departments

Testing is no longer confined to applications—it now spans entire ecosystems.

Understanding the New Dimensions of AI Risk

Unlike traditional software, AI introduces complex and multi-dimensional risks:

- Bias and fairness issues → impacting real-world decisions

- Data privacy risks → due to large-scale data usage

- Model inaccuracies and hallucinations → leading to incorrect outputs

- Regulatory non-compliance → exposing organizations to penalties

Each of these risks calls for its own validation strategy.

Traditional test cases alone are not enough.

QA must evolve toward:

- Behavioral testing

- Ethical validation

- Risk-based testing approaches
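
To make the idea of ethical validation concrete, here is a minimal sketch of a fairness check that could sit in a test suite. It measures the gap in approval rates between groups (often called demographic parity difference). The group labels, the sample decisions, and the `MAX_GAP` threshold are all assumptions for illustration; an acceptable threshold and the right fairness metric depend on the use case and the applicable regulation.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of positive decisions for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        positives[group] += int(approved)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(records):
    """Difference between the highest and lowest group selection rate."""
    rates = selection_rates(records)
    return max(rates.values()) - min(rates.values())


# Hypothetical model decisions: (group label, was the applicant approved?)
decisions = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", True),
]

MAX_GAP = 0.3  # assumed tolerance, not a regulatory value

gap = demographic_parity_gap(decisions)
if gap > MAX_GAP:
    raise AssertionError(f"Selection-rate gap {gap:.2f} exceeds {MAX_GAP}")
```

A check like this does not prove a model is fair. It simply turns one fairness expectation into something a pipeline can fail on.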

Compliance Is Now Continuous

Compliance in the AI era is not a one-time certification activity—it is an ongoing process.

Organizations are expected to implement:

- Continuous monitoring of AI systems

- Regular risk assessments

- Lifecycle governance from training to deployment

- Real-time anomaly detection

This introduces a new paradigm:

“Compliance as a continuous engineering practice.”

QA teams are uniquely positioned to operationalize this through automation, observability, and validation pipelines.
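
As one possible illustration of continuous monitoring, the sketch below flags a quality metric that drifts too far from its recent baseline using a simple z-score. The metric values and the threshold of 3.0 are assumptions; production systems typically rely on dedicated observability tooling and more robust drift statistics.

```python
from statistics import mean, stdev


def is_anomalous(baseline, value, z_threshold=3.0):
    """Flag a value that lies more than z_threshold standard deviations
    from the mean of the baseline window."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A flat baseline: any change at all is treated as anomalous.
        return value != mu
    return abs(value - mu) / sigma > z_threshold


# Hypothetical daily accuracy scores from a monitored model.
baseline_scores = [0.91, 0.90, 0.92, 0.89, 0.91, 0.90]

for todays_score in (0.90, 0.80):
    status = "ALERT" if is_anomalous(baseline_scores, todays_score) else "ok"
    print(f"score={todays_score:.2f} -> {status}")
```

Run on a schedule or on each batch of predictions, a check of this kind gives compliance teams a recurring, timestamped signal instead of a one-off certification.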

Auditability and Traceability Are Mandatory

One of the strongest requirements in 2026 is auditability.

Organizations must be able to answer:

- Why did the AI make this decision?

- What data was used?

- Which model version was deployed?

- What controls were in place?

This demands:

- Detailed documentation

- Logging and traceability mechanisms

- Transparent model behavior

For QA, this translates into validating not just outputs but also decision explainability and trace logs.
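
The four audit questions above map naturally onto fields in a decision log. The sketch below builds such a record and checks it for completeness. The field names, the model version string, and the control labels are hypothetical; they are not taken from any specific regulation or logging standard.

```python
import hashlib
import json
from datetime import datetime, timezone

# Assumed minimum set of fields an auditor would need per decision.
REQUIRED_FIELDS = {
    "timestamp", "model_version", "input_hash",
    "decision", "explanation", "controls",
}


def build_audit_record(model_version, features, decision, explanation, controls):
    """Create a trace-log entry for a single AI decision."""
    # Hash the inputs so the record is traceable without storing raw data.
    payload = json.dumps(features, sort_keys=True).encode("utf-8")
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_hash": hashlib.sha256(payload).hexdigest(),
        "decision": decision,
        "explanation": explanation,
        "controls": controls,
    }


def missing_audit_fields(record):
    """Return the required fields that are absent or empty."""
    return sorted(f for f in REQUIRED_FIELDS if not record.get(f))


# Hypothetical example values.
record = build_audit_record(
    model_version="credit-model-1.4.2",
    features={"income": 52000, "tenure_months": 18},
    decision="declined",
    explanation="debt-to-income ratio above policy limit",
    controls=["human_review_on_decline"],
)
print(missing_audit_fields(record))
```

A QA suite can then assert that every decision emitted in a test run produces a record with no missing fields.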

Privacy by Design Is No Longer Optional

Privacy is now a foundational requirement in AI systems.

It must be embedded across:

- Data collection pipelines

- Model training processes

- Deployment architectures

“Privacy by design” means building systems to be compliant by default rather than retrofitting privacy as an afterthought.

QA teams must validate:

- Data minimization practices

- Consent handling

- Data masking and anonymization
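
A simple way to validate masking is to scan outbound or logged text for patterns that should never appear. The sketch below masks email addresses and then verifies that none remain. The regular expression is a deliberately simplified assumption and will not catch every valid address; real pipelines usually cover many more identifier types.

```python
import re

# Simplified email pattern, assumed for illustration only.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def mask_emails(text):
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_PATTERN.sub("[EMAIL]", text)


def contains_email(text):
    """Return True if the text still contains an email-like string."""
    return EMAIL_PATTERN.search(text) is not None


# Hypothetical record that is about to be written to a training dataset.
raw = "Contact jane.doe@example.com about ticket 4821."
masked = mask_emails(raw)

if contains_email(masked):
    raise AssertionError("Unmasked email found in output")
print(masked)
```

The same leak check can run against model outputs and application logs, not just against the masking function itself.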

The Cost of Getting It Wrong

The consequences of poor AI governance are no longer theoretical.

Organizations now face:

- Regulatory penalties

- Financial losses

- Reputational damage

- Loss of customer trust

As AI systems become more autonomous, the risks scale faster—and so does the impact.

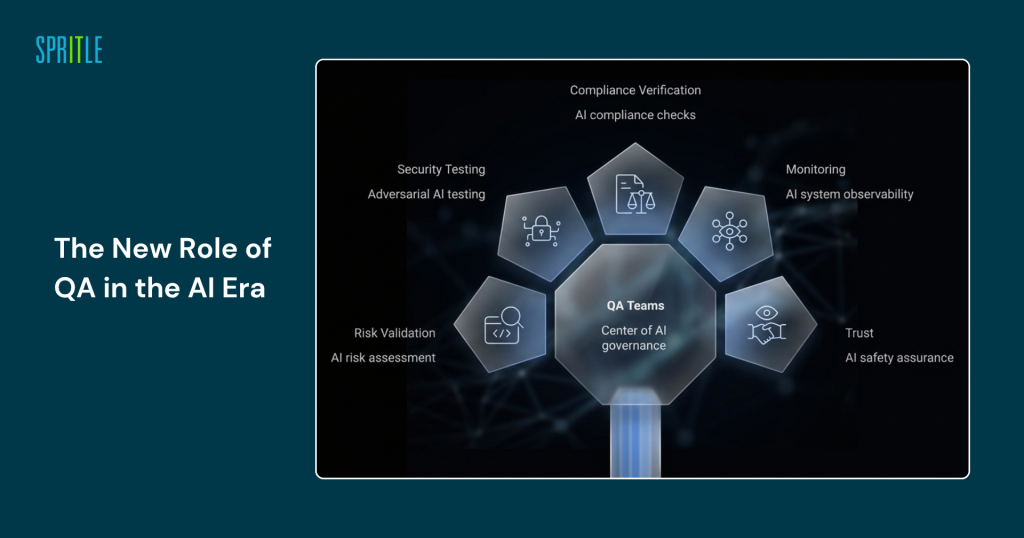

The New Role of QA in the AI Era

This transformation positions QA teams at the center of AI governance.

The role is expanding to include:

- AI risk validation

- Security and adversarial testing

- Compliance verification

- Monitoring and observability

- Trust and safety assurance

In essence, QA is evolving into a guardian of responsible AI.

Final Thoughts

AI in 2026 is not just about innovation—it’s about accountability.

Organizations that succeed will not be the ones that adopt AI the fastest, but the ones that adopt it safely, responsibly, and transparently.

For QA leaders, this is an opportunity to step beyond traditional boundaries and play a strategic role in shaping the future of technology.

Because in the age of AI, quality is no longer just about correctness; it is about trust.